If that trend can be continued, and quantum computers can be increased in scale, then they will be capable of computation that would be impossible on even the most powerful classical computers. Now, Google has shown that logical qubits can be increased in size and that this scale brings a reduction in the overall error rate. Subsequent work at the Joint Quantum Institute in Maryland managed to reach a point where logical qubits didn’t worsen error rates, albeit at a technical rather than practical level. Google demonstrated this when it first announced a working error correction scheme in 2021, which resulted in a net increase in errors. “So far, when engineers tried to organise larger and larger ensembles of physical qubits into logical qubits to reach lower error rates, the opposite happened,” says Hartmut Neven at Google. This means that adding more physical qubits to your logical qubit can actually be detrimental. This new version of quantum theory is even stranger than the original This is how much of the error correction in classical computers works, but in quantum computers there is an added complication because each qubit exists in a mixed superposition of 0 and 1 and any attempt to measure them directly destroys the data. One popular approach to this is called surface code correction, in which many physical qubits work as one so-called logical qubit, essentially introducing redundancy. But today’s qubits are susceptible to interference and errors that must be identified and corrected if we want to build quantum computers large enough to actually tackle real-world problems. The building blocks of a quantum computer are qubits, akin to the transistors in a classical computer chip.

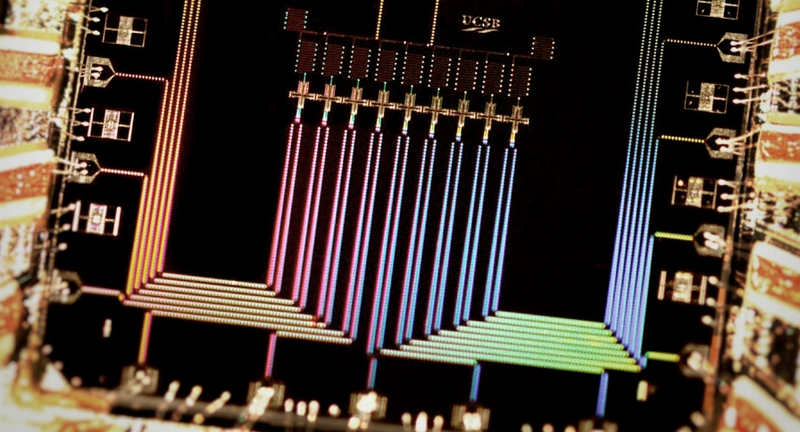

Google has demonstrated that its approach to quantum error correction – seen as an important part of developing useful quantum computers – is scalable, giving researchers at the company confidence that practical devices will be ready in the coming years. Google’s quantum computer (left) can correct its own errors
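To make the redundancy idea concrete, here is a minimal sketch of the classical analogue the article alludes to: a repetition code with majority voting. It is not Google’s surface code, which has to detect errors without measuring the data qubits directly. The function names, the error probabilities and the trial count are all assumptions chosen for illustration.

```python
import random


def encode(bit, copies=3):
    """Store one logical bit as several identical physical copies."""
    return [bit] * copies


def apply_noise(bits, flip_prob, rng):
    """Flip each physical bit independently with probability flip_prob."""
    return [b ^ 1 if rng.random() < flip_prob else b for b in bits]


def decode(bits):
    """Recover the logical bit by majority vote (use an odd number of copies)."""
    return 1 if sum(bits) > len(bits) / 2 else 0


def logical_error_rate(copies, flip_prob, trials=20000, seed=0):
    """Estimate how often majority voting returns the wrong logical bit."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    errors = 0
    for _ in range(trials):
        received = apply_noise(encode(0, copies), flip_prob, rng)
        if decode(received) != 0:
            errors += 1
    return errors / trials


if __name__ == "__main__":
    for p in (0.05, 0.6):  # illustrative physical error rates
        for n in (1, 3, 5):
            print(f"flip_prob={p} copies={n} logical_error={logical_error_rate(n, p):.4f}")
```

In this toy model, extra copies only help when each copy is wrong less than half the time. Above that point, adding copies makes the decoded result worse. This loosely mirrors the article’s point that a bigger logical qubit is only better once the physical qubits are good enough.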
